Welcome to the mid-week update from New World Same Humans, a newsletter on trends, technology, and society by David Mattin.

If you’re reading this and haven’t yet subscribed, join 22,000+ curious souls on a journey to build a better future 🚀🔮

Here in London, winter is coming. But a collection of remarkable stories means we have a white-hot instalment of New Week.

New research shows that large language models such as GPT-3 can accurately simulate the opinions and preferences of real people. It could herald a revolution for the social sciences.

Meanwhile, one handy chart captures the stunning scale of Mark Zuckerberg’s metaverse bet. And researchers grow a tiny brain in a petri dish and watch it teach itself to play Pong.

Let’s get started.

👨 The person simulator

This week, a window opened on yet another strange and powerful aspect of large language models.

Researchers at Brigham Young University published a paper called Out of One, Many: Using Language Models to Simulate Human Samples; it looks at the use of GPT-3 to simulate the opinions of different population groups with a high degree of accuracy.

The researchers conditioned GPT-3 by feeding it response data from multiple large surveys of political and cultural preferences in the US. They found that the AI could then output further opinions and perspectives ‘in ways that are nuanced, multifaceted, and reflects the complex interplay between ideas, attitudes, and socio-cultural context that characterise human attitudes.’

Essentially, GPT-3 is good at simulating people: if you tell it a bit about the opinions of a person, it’s good at predicting other opinions and preferences they’ll hold. Here’s GPT-3 doing that when it comes to US politics:

The researchers suggest this opens up an arresting possibility: silicon sampling. That is, the simulation of people inside language models, who can then be interrogated as a real human population would be.

⚡ NWSH Take: This story sees two NWSH obsessions come together. First, natural language models and our current quest to understand their nature and capabilities. And second, the emergence of new forms of simulation as powerful tools for insight in the 21st century. // I’ve written repeatedly, most recently in New Week #100, on the dream when it comes to next-generation simulations. That is, the ability to simulate complex social dynamics and collective human behaviours, including entire societies. The findings in this paper offer an exciting new avenue when it comes to that quest. // The potential use cases are manifold, and hugely consequential. Imagine a future in which political parties test new policy ideas via silicon sampling, before putting them in front of real focus groups. Or where brands put product and service concepts in front of simulated people. The report authors warn that silicon sampling will aid bad actors, too; they’ll use simulated people to test the believability and effectiveness of disinformation campaigns. // Computation and the social sciences just collided in an entirely new way. NWSH will keep watching.

💸 Okay, bet

This week’s Meta Connect event saw Mark Zuckerberg invite us deeper into his vision for the metaverse (you already know this; the coverage was unavoidable).

The company showcased a raft of new technology, including the new Meta Quest Pro, which delivers higher pixel resolution and an improved pass-through AR experience.

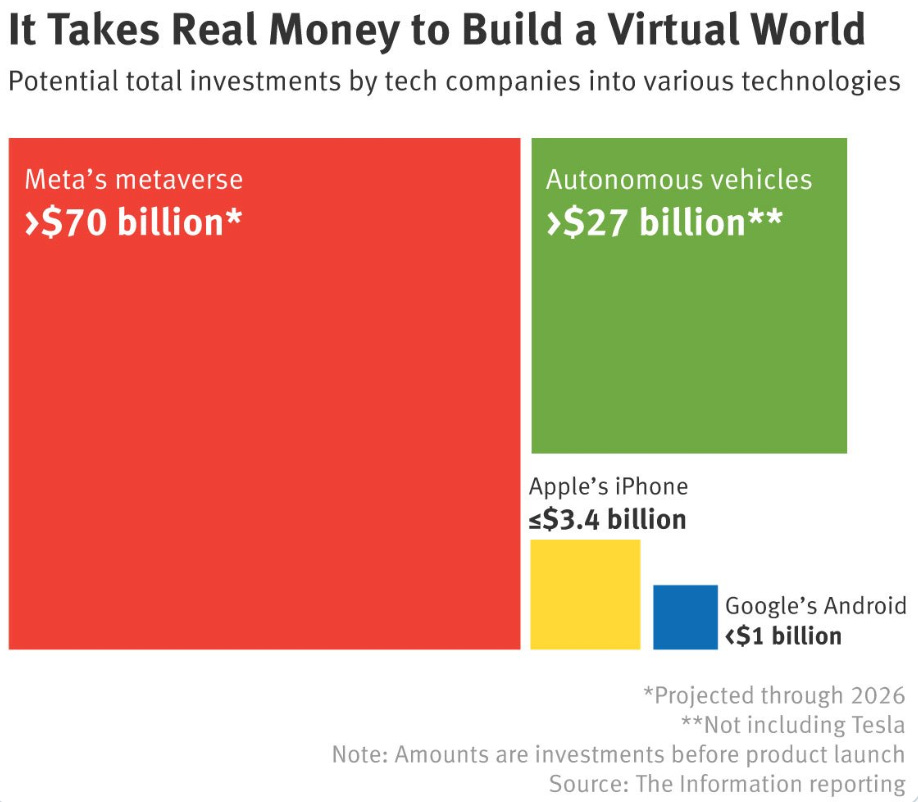

The coverage that most caught my eye? It was this chart from tech publication The Information (£), which shows the scale of the bet that the Zuck is making.

Meta is set to spend an estimated $70 billion on its pivot to the metaverse by 2026. According to The Information’s analysis, no tech company has ever invested as much in a new technology or platform before seeing proof of success in the market. It amounts to a calculated gamble that is unprecedented in Silicon Valley, and that may be unprecedented in corporate history.

⚡ NWSH Take: When Facebook went public in 2012, Zuckerberg devised a share class structure that gave him 58% of voting rights. Meta is a corporate monarchy, and now the King is marching his 80,000 subjects towards a new Promised Land. // Critics argue that Meta is embracing this pivot to distract us, consumers and regulators alike, from concerns around disinformation and targeted ads. Look at the scale of this bet, and that idea crumbles. If all the Zuck wanted to do was distract people, there are cheaper ways to do it. What’s happening inside Meta only makes sense in light of a deep, ideological belief that the metaverse is the next, and in some sense final, stage of this journey we’ve all been on for 30 years; our journey into this thing we call the internet. For true metaverse believers, it’s 1995 all over again; the gold rush is only just beginning. // Is Zuckerberg right? No one can know yet; that’s what makes this a bet. But while there are huge questions to answer, and this newsletter will spend many words on those questions in future instalments, the boundaries that separate this world from virtual worlds will become ever more porous in the years ahead. The competition to own that shift will be titanic. One thing is certain: Meta are positioning themselves for a long war.

🧠 Brain pong

This is surely the strangest story ever featured in an instalment of New Week.

Researchers at a life sciences startup called Cortical Labs announced this week that they grew a mini-brain in a dish, and that it then taught itself to play the video game Pong. This story first appeared late in 2021, but this week saw publication of the full research paper in the journal Neuron.

Lab-grown mini-brains, also known as brain organoids, are tiny clumps of neurons cultivated from human stem cells. They were first developed in 2013, and are doing much to help scientists understand the nature and workings of the brain.

But this is the first time brain organoids have been plugged into an external environment and interacted with that environment in a goal-directed way.

Researchers plugged the brain organoid into a ‘simulated game environment’ that sent it information about the position of the paddle and ball. The organoid was motivated to avoid the reset of the game that results when the paddle misses the ball, because this subjects it to a high energy demand. As a result, it started to move the paddle to avoid these resets. Within five minutes the organoid was demonstrating a hit rate way better than random chance.

In other words, it had taught itself to play a 1970s-era video game.

⚡ NWSH Take: The strangest story ever featured in NWSH? It may also turn out to be one of the most consequential. The researchers talk of having created a ‘sentient’ mini-brain. That’s a stretch; most people take sentient to mean conscious and capable of subjective experience. These brain organoids weren’t that. Then again, neither is your phone; but it’s still useful. // In short, this work raises the possibility that in future we’ll grow mini-brains in the lab and leverage their computational power. What’s more, while the brain organoids didn’t get as good at Pong as our best AIs, they did learn the game faster; AIs typically take around 90 minutes to achieve the competence the mini-brains managed in five. Not only, then, will we be able to grow our own compute, but it may prove more effective in some contexts than machine intelligence. The real power may come, though, if via these organoids we can fuse organic and machine intelligence in new ways. As the research paper has it, this work may ‘give rise to silico-biological computational platforms that surpass the performance of existing purely silicon hardware.’ In the intelligence revolution we’re living through, it feels as though we just stormed a whole new barricade.

🗓️ Also this week

☀️ The White House is coordinating a five-year research plan to study ways to cool the Earth by reflecting sunlight back into space. The Office of Science and Technology Policy say the research will look at the costs and benefits of a number of plans, including a plan to spray reflective aerosols into the stratosphere.

🔋 Greece was briefly powered entirely by renewable energy sources this week. The Independent Power Transmission Operator said a mix of renewables, including wind and solar, for the first time provided 100% of the country’s electricity for around five hours last Friday.

☄️ NASA confirmed that its Double Asteroid Redirection Test (DART) mission was a success. Scientists say their spacecraft collided with, and changed the course of, the Dimorphos asteroid.

👨‍💻 A Dutch court says it’s a human rights violation to demand employees work with their webcams on. In a case involving a Dutch telemarketer hired by a US firm, the court ruled that insisting on webcams is ‘in conflict with the respect for the privacy of workers’.

💊 Researchers say AIs can accurately predict the response of specific patients to antidepressant medication. A new paper shows how an AI can predict response to the antidepressant sertraline with 84% accuracy.

🚨 Police in Edmonton, Canada, used DNA phenotyping to generate an image of an unseen suspect. The image was created using DNA collected during the investigation; the police have no other images of the suspect. Critics point out that the technique does not take into account the suspect’s age and diet, or other environmental factors.

😱 A team of scientists called for urgent research into the nature and causes of civilisational collapse. Writing in the prestigious Proceedings of the National Academy of Sciences, the researchers say discussion of collapse has been dominated, so far, by philosophers and artists, and call for scientists to start studying ‘collapse mechanisms’ and how we can collectively adapt so as to avoid them.

🌍 Humans of Earth

Key metrics to help you keep track of Project Human.

🙋 Global population: 7,981,031,836

🌊 Earths currently needed: 1.7905796748

💉 Global population vaccinated: 62.9%

🗓️ 2022 progress bar: 79% complete

📖 On this day: On 14 October 1066 the Battle of Hastings marks the start of the Norman Conquest of England.

Synthetic Futures

Thanks for reading this week.

So it turns out that large language models are, among other things, accurate people simulators. The implications for the social sciences — including futures and foresight studies — are huge.

This newsletter will keep watching. And there’s one thing you can do to help: share!

Now you’ve reached the end of this week’s instalment, why not forward the email to someone who’d also enjoy it? Or share it across one of your social networks, with a note on why you found it valuable. Remember: the larger and more diverse the NWSH community becomes, the better for all of us.

I’ll be back next week. Until then, be well,

David.

P.S. Huge thanks to Nikki Ritmeijer for the illustration at the top of this email. And to Monique van Dusseldorp for additional research and analysis.

New Week #102