Welcome to the mid-week update from New World Same Humans, a newsletter on trends, technology, and society by David Mattin.

If you’re reading this and haven’t yet subscribed, join 24,000+ curious souls on a journey to build a better future 🚀🔮

To Begin

This week, a scrapbook of examples that crossed my desk.

Given what’s unfolding right now it will come as no surprise: they’re all founded in AI.

Get ready for a glimpse of the incredible advances being made in text-to-world models.

Also, see DeepMind’s take on the iconic 20th-century political philosopher John Rawls. And seize your chance to collaborate with Canadian pop star Grimes.

Let’s go!

🖼 The worlds to come

Twitter users are sharing the 3D worlds they’re creating using new text-to-world models.

This user created a 3D dungeon world that, if you’re around my age, is a nostalgia-inducing homage to early-90s first-person shooter games. It gives me strong Doom vibes.

This world was created using Runway AI’s powerful Gen-2 model, which launched last month.

The company co-created the text-to-image model Stable Diffusion, and just days ago launched its first app, which gives users mobile access to Gen-1.

Meanwhile, this YouTuber showcased an integration of ChatGPT into the virtual reality action role-playing game Skyrim VR. Via that integration it’s now possible to have natural, open-ended conversations with in-game characters.

Two weeks ago in New Week #119 I wrote about how Stanford researchers used an enhanced LLM to create simulated humans and set them loose in a virtual city called Smallville.

I speculated that we’d eventually see these kinds of simulated humans in video games; it’s already happening.

Want more generative world building? Check out this amazing new generative AI take on the classic text adventure game. Iconic examples such as Zork — released in 1977 — made clever use of pre-determined responses to give the impression of world exploration. Now, we can use GPT-4 to create an infinite text world, including multiple simulated personas and endless, open conversations.

⚡ NWSH Take: These text-to-world models are evolving by the week; we’re building the holodeck, and it’s going to take us to amazing places. Who will create the first consumer-facing, mass adoption platform for building immersive worlds that users can explore with their friends? // While the implications for the video game industry are vast, there are also deep philosophical issues in play here. The emergence of text-to-world models marks the end of a long journey that began with language. First we used language to represent the world around us. Now, we are drawing new worlds out of that language. It turns out that our writing was a hidden form of world building all along. If you want to dive really deep on all this see my ongoing essay series The Worlds to Come — the third instalment will arrive soon.

🙋♂️ Aligned position

Also this week, researchers at DeepMind published a new paper on the AI alignment question. Alignment is about ensuring that AIs act in accordance with our ethical norms and values.

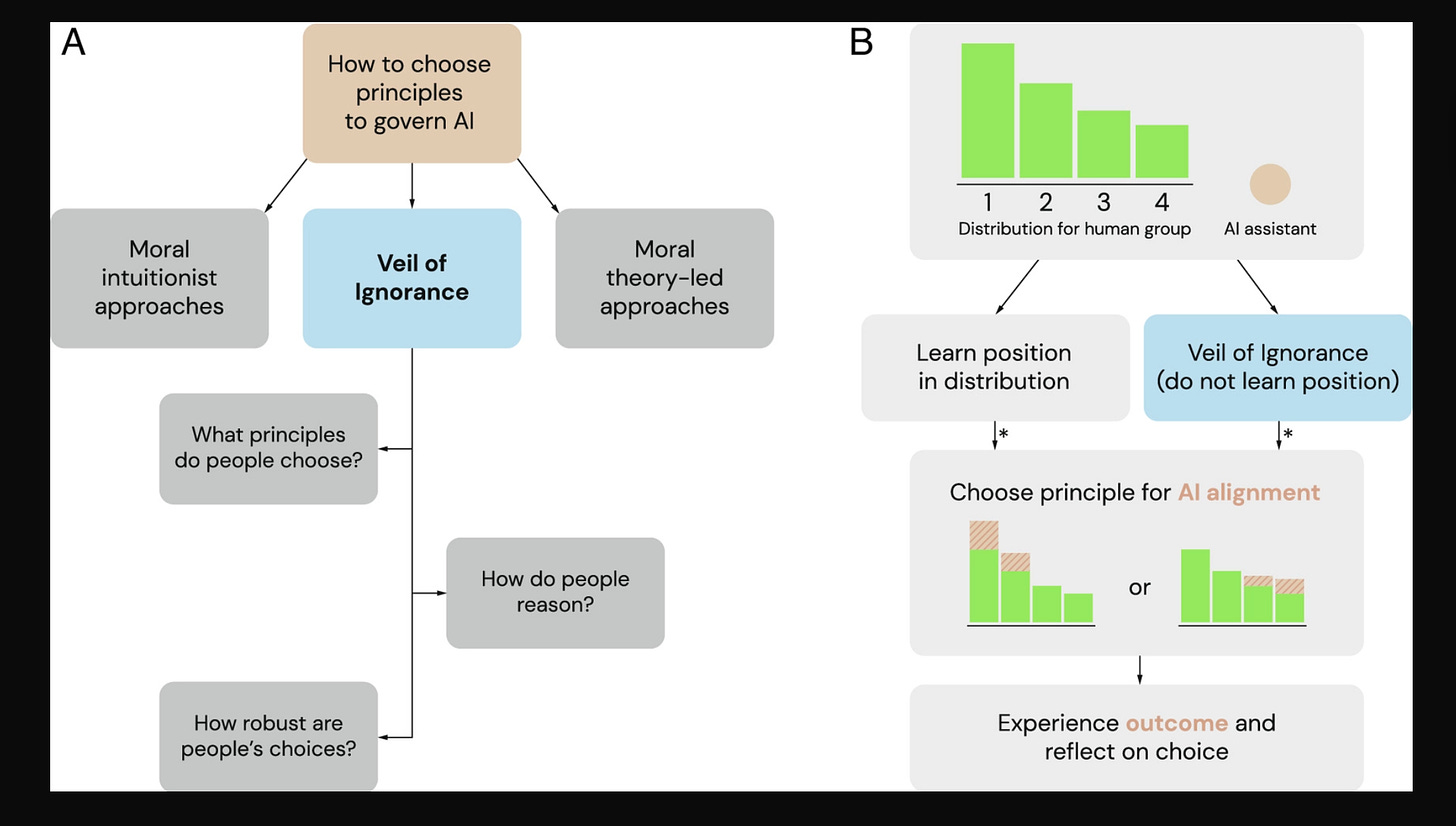

This new DeepMind paper focuses not on the technical challenges, but on another issue. That is, how do we agree on the values that we want AIs to align with?

The authors develop a framework for the selection of values based on a legendary thought experiment called the original position, created by the political philosopher John Rawls.

In this thought experiment a group of citizens are asked to devise a just society, which they will then join. To complete this task these imaginary citizens are whisked behind what Rawls calls the veil of ignorance, such that they have no idea who they will eventually be — their gender, race, affluence, anything — when they finally become part of the society they’re creating. Rawls argued that people behind the veil of ignorance would choose fair and equitable laws that prioritised the minimum wellbeing of the worst off.

The DeepMind researchers gathered 2,508 participants, and found that those placed in the imaginary original position were more driven by considerations of fairness, and did indeed lean towards AI alignment values that prioritised the worst off. They were also less liable to change their minds than those who were simply asked to come up with values they considered fair.

⚡ NWSH Take: Could the original position — known to every political philosophy undergraduate — enjoy an encore as a powerful framework for the selection of AI alignment values? The authors of this paper conclude by arguing that it could be just what we need. // The broader, submerged argument made by this paper, though, is also highly valuable. The mainstream discussion on alignment typically assumes that the values we should ask AIs to align with are obvious. Given that we humans have never been able to align with one another, this clearly isn’t the case. So who and what, exactly, are we seeking AI alignment with? We need better answers to these questions. And they’ll only come via a process of politics, in which competing values are traded off against one another. More thoughts on AI alignment from me here.

🎤 Force multiplier

Canadian pop star Grimes says songwriters and fans are free to use an AI synthesised version of her voice in their original music.

Inspired by the AI Drake song Heart on My Sleeve, which went viral last week, the singer says she’ll share revenues with any creators who leverage her AI-voice in their work.

⚡ NWSH Take: Soon enough, every artist, musician, and writer will be able to train an AI model on their past work and then license that model for use by third parties. In this way, AI will allow those artists to scale beyond themselves in previously unthinkable ways. Imagine GPT-Einaudi, or GPT-Banksy. And imagine that long after those artists have died, new creative outputs in their unique style continue to be made. AI makes possible a strange new kind of ghostly afterlife for artists and creatives. It’s already happening: back in New Week #21 I wrote about legendary South Korean folk singer Kim Kwang-seok, who was reincarnated using AI on a leading TV talent show. // Eventually, we’ll come to view the life and work of an artist as only a kind of preliminary stage; one that trains an AI model on the artist’s unique style, perspective, and voice so that this style can live on, and create new works, forever. Will this be only a hollow, ersatz version of the work the artist created when alive? Or can AI really capture something essential about the creative spirit and worldview of a person? As a culture, we’ll have to decide. It’s going to be a fascinating journey.

One Step Beyond

Thanks for reading this week.

The emergence of AI text-to-world models makes for a strange twist in the ancient relationship between humans and language.

This newsletter will keep watching, and trying to make sense of it all. There’s one thing you can do to help: share!

If this week’s instalment resonated with you, why not forward the email to someone who’d also enjoy it? Or share it across one of your social networks, with a note on why you found it valuable. Remember: the larger and more diverse the NWSH community becomes, the better for all of us.

I’ll be back next week. Until then, be well,

David.

P.S. Huge thanks to Nikki Ritmeijer for the illustration at the top of this email. And to Monique van Dusseldorp for additional research and analysis.

Any undergraduate political philosophy…you make that sound like it is common. You’re a very good writer and your subject matter is important, but that part about determining standards for AI is incomprehensible. And I suspect we both know it is an exercise in futility. I would like to hear your take on the Russians using AI to manage election campaigns they know are rigged to save money. That’s the weirdest AI story I’ve heard this week.